Sport and performance art involve skilled movements that must be learned and improved. For example, pitchers throw baseballs, competitive cyclists sprint out of the saddle, and ballet dancers pirouette. Experts who have mastered these movements perform them automatically, effortlessly, and without much conscious awareness of their bodies (but see Toner et al. 2016). But learning or improving a movement requires compensatory adjustments based on bodily feedback: body position must be sensed, deviation from the ideal registered, and adjustments made. The problem is that many athletes and performance artists have poor bodily awareness, which makes learning new movements a struggle.

Here I want to discuss why bodily awareness is often poor and to suggest some potential ways of improving it. While most athletes, performance artists, and coaches are well aware of the literature on topics like aerobic conditioning, strength training, and nutrition, bodily awareness and its role in movement execution is rarely discussed (at least in the sports scene). In what follows I draw together work from philosophy, neuroscience, and engineering to present a holistic picture of what we know about bodily awareness.

Phenomenology

Athletes and performers can only make deliberate adjustments to body position based on conscious bodily feedback. I can only improve my performance of a yoga pose (for example) if I feel how my body is out of position. Hence a natural place to start is with our subjective bodily experience.

There is something it’s like for you to inhabit, be aware of, and feel your own body (Nagel 1974). This “phenomenology” (as it’s called) consists of an array of bodily sensations, such as the pain of sprained tendons, the ache of fatigued muscles, the “burn” of lactic acid, or the felt pressure of touch. But the phenomenology of bodily awareness (including the awareness of movement and body position) consists of more than just these common sensations. Since I’m a cyclist, I’ll use pedalling a bicycle as an example.

As I pedal, my bodily experience is private. You cannot observe it from the “outside”; I have the experience from “inside” my own inner perspective. As just mentioned, my experience includes an array of purely private sensations, but within my experience I also notice the objective physical world. I feel not just my sensations, but also the pedals under my feet and the changing position of my own body. So the first upshot is that bodily experiences reveal to us our bodies and the implements we’re manipulating with our bodies (Matthen forthcoming).

Consider a similar (hopefully familiar) case: driving a car. As you drive, with your bum in the seat and your hands on the steering wheel, you have tactile sensations (a felt sense of pressure) localized in your hands and legs. But if you pay attention, you can also feel your hands and legs themselves, as well as the car. For example, if you lift your foot off the gas, you can feel its location and whether it’s hovering over the gas or the brake. If the car loses traction, you can feel the wheels slide across the road. This sense of your foot’s location, or of the car sliding, is not just a localized tactile sensation in your hands or legs.

As I pedal I’m struck by an overwhelming rush of bodily sensations: warm, dull twinges in my legs, a diffuse felt sense of effort, etc. But when I try to focus on these sensations, to describe them, I find myself focused on either my physical body itself, or the pedals under my feet (Moore 1903). Conversely, if you asked me how I manage to feel the pedals or my body (i.e., asked me through which sensations I felt them), I wouldn’t have an answer. For the most part, I don’t feel my body or the pedals through any distinct bodily sensations. For example, I always know when my right foot is at the bottom of the pedal stroke, but there are no felt sensations in my legs which correlate with that leg position, or which I can use to know my leg position (see also de Vignemont 2018, section 1.1). Hence the second upshot is that we don’t achieve bodily awareness by focusing on our raw bodily sensations.

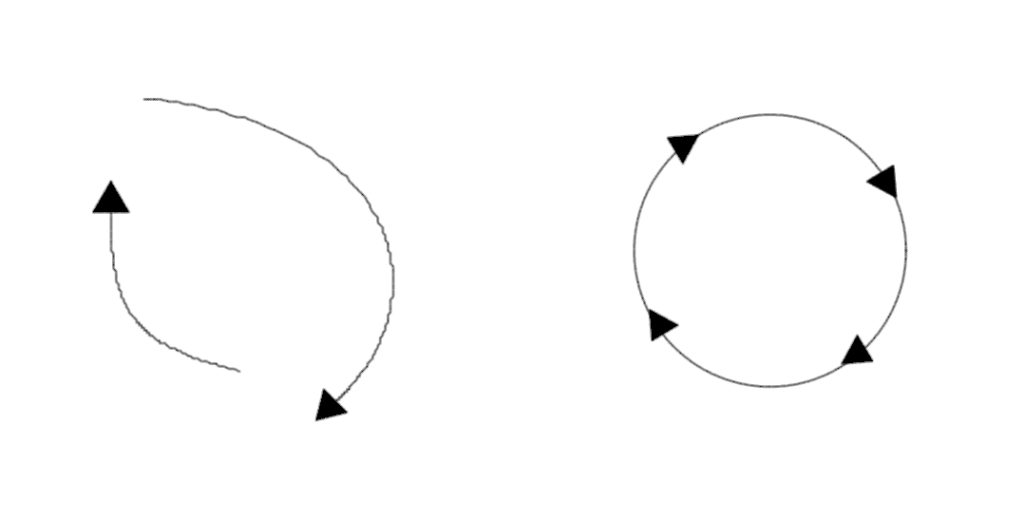

Curiously, if I focus only on how my legs feel as they move through the pedal stroke, the portion of the movement in which I’m applying force to the pedals (the downstroke) feels much longer than the rest. I hardly feel my legs and feet at all as they move through the upstroke. Interestingly, if I focus on the pedals instead of my body position, I accurately feel them moving through a circle. The third upshot: experience of body position is distorted by one’s felt sense of effort, but experience of external implements can be more accurate.

As I pedal, it is possible to break this transparency (the way attention slides past sensations to the body and the pedals themselves) and to correct the distortion. For example, by pulling up on the pedals through the upstroke, I bring the motion of my leg and foot through that portion of the stroke into sharper focus. By pulling my right knee to the outside, I elicit new sensations indicating that my leg is out of position. By eliciting new bodily sensations in this way and noticing how they covary with the position of the pedals (which I perceive accurately), my experience of my body becomes more accurate: I can feel not only the pedals, but also my feet and legs, moving through circles. Fourth upshot: by exploring how my bodily sensations depend on my movement, and comparing them with other, accurate information about body position, I can improve the accuracy of my bodily experience.

Neurobiology

Although the above observations are based only on my own experience of pedalling, they comport well with what we know about the neurobiological underpinnings of bodily awareness.

Proprioception is our body’s sense for its own position. It’s facilitated by mechanosensory neurons (called proprioceptors) in our muscles, tendons, and joints (Tuthill and Azim 2018, R194). These proprioceptors include muscle spindles in skeletal muscles which encode information about muscle length, Golgi tendon organs located between muscles and tendons which encode muscle force production and limb load, and joint threshold detectors embedded in joint capsules and ligaments which encode when joints reach specified angles, such as the limits of the joint’s range of motion (ibid, R196).

While our proprioceptors do send information via interneurons in the spinal cord to our brains, they also talk directly to motor neurons innervating nearby muscles (Tuthill and Azim 2018, R197, R200-201). The upshot is that while proprioceptors encode a rich array of information about body position, much of that information is kept in local neural circuits and never gets to the brain.

The brain itself keeps track of body configuration in a number of body models: neural activity in various brain regions which encodes or represents how the brain thinks the body is positioned (Naito et al. 2016). These models guide the selection of motor commands for executing movements, but also presumably underlie our conscious bodily experience. It’s natural to think that the brain builds these models based on proprioceptive feedback, but this is mostly not the case. Instead, many of these models are built from copies of motor commands (“efference copies”): the brain takes its current representation of the body and updates it based on the most recent set of efferent signals from the motor cortex (Miall and Wolpert 1996).

There are several reasons why it makes engineering sense for the brain to use these forward models (as they’re called), but I’ll mention just one. Feedback from proprioceptors takes time to reach the brain and be processed, anywhere between 30 and 300ms (Miall and Wolpert 1996, 1269; Tuthill and Azim 2018, R200). This is often too long to be of use for on-the-fly motor control. Forward models provide a real-time substitute.
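To make the latency point concrete, here is a toy simulation. It is a minimal sketch under made-up assumptions: a single joint driven by a 1 Hz sinusoidal velocity command, a forward model that is assumed to be perfect, and a 100 ms feedback delay picked from the 30-300 ms range above. The function name `simulate` and all parameter values are hypothetical. It compares the worst-case error of an estimate built by integrating efference copies with one that simply uses the latest (delayed) proprioceptive sample.

```python
import math

def simulate(duration_ms=1000, dt_ms=1, delay_ms=100):
    """Worst-case tracking error of two joint-angle estimates over one toy movement.

    Hypothetical setup: the joint's angular velocity follows a 1 Hz cosine command,
    so the angle traces roughly sin(2*pi*t) radians.
    Returns (forward_model_error, delayed_feedback_error) in radians.
    """
    steps = duration_ms // dt_ms
    delay_steps = delay_ms // dt_ms
    dt = dt_ms / 1000.0
    true_angle = 0.0
    model_angle = 0.0   # forward-model estimate, updated only from efference copies
    history = []        # past true angles, used to emulate delayed proprioception
    model_err = 0.0
    feedback_err = 0.0
    for k in range(steps):
        command = 2 * math.pi * math.cos(2 * math.pi * k * dt)  # commanded velocity, rad/s
        true_angle += command * dt   # the limb moves as commanded (no disturbances)
        model_angle += command * dt  # the efference copy predicts that movement immediately
        history.append(true_angle)
        # The newest proprioceptive sample available is delay_steps old.
        delayed = history[k - delay_steps] if k >= delay_steps else 0.0
        model_err = max(model_err, abs(true_angle - model_angle))
        feedback_err = max(feedback_err, abs(true_angle - delayed))
    return model_err, feedback_err
```

With these settings the delayed estimate is off by roughly 0.6 rad at worst, while the idealized forward model tracks exactly. Real forward models are not perfect, which is why they need the recalibration described next.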

What does the brain do with feedback from proprioceptors? For the most part, it suppresses it. Roughly, feedback that matches what the forward model predicts is suppressed, and only unusually strong, unexpected feedback is left. That feedback is then used to update and recalibrate the model (Tuthill and Azim 2018, R199-200). For rhythmic motions (e.g., walking, pedalling) the ratio of incoming feedback to outgoing motor commands should be low, but for complex and dynamic sport movements this ratio needs to be high, as these movements require more calibration (Tuthill and Azim 2018, R198).
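The suppress-then-recalibrate loop can be sketched as a prediction-error filter. This is a deliberately simplified illustration, not a model taken from Tuthill and Azim: feedback is assumed to be a linear function of the motor command, the forward model holds a single gain estimate, residuals smaller than a hypothetical `threshold` are discarded, and larger residuals are passed on and used for a delta-rule (LMS-style) update of the gain.

```python
def process_feedback(commands, true_gain, model_gain=1.0, threshold=0.05, rate=0.5):
    """Toy predictive suppression: only surprising feedback gets through.

    Hypothetical assumptions: actual feedback = true_gain * command, and the
    forward model predicts model_gain * command. Returns the recalibrated
    model_gain and the list of residuals that escaped suppression.
    """
    passed = []
    for u in commands:
        predicted = model_gain * u
        actual = true_gain * u
        residual = actual - predicted      # prediction error
        if abs(residual) <= threshold:
            continue                       # matches the prediction: suppressed
        passed.append(residual)
        # Delta-rule recalibration; stable while rate * u**2 < 2.
        model_gain += rate * residual * u
    return model_gain, passed
```

For ten identical commands of 1.0 and a true gain of 1.5, only the first four residuals (0.5, 0.25, 0.125, 0.0625) get through; after that the model is accurate to within the threshold and everything else is suppressed.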

The brain’s body models (at least those constructed based on signals from proprioceptors) encode spatial information about body position in terms of joint angles and other motion-related quantities (Bermudez 2005; Sarlegna & Sainburg 2009). This means that our body models don’t encode body position in a format that’s easily translated into the spatial coordinates we use to represent what we see and hear (see also Matthen forthcoming). This is why movement accuracy deteriorates when you are not able to see your own limbs (Sarlegna & Sainburg 2009, 321). Further, there’s even some evidence that proprioception-based body models are systematically inaccurate, e.g. distorting the spatial dimensions of our limbs (Wong 2014; 2017). Hence, accurate body movements depend on additional information from other sensory modalities (like vision) which do place the body within an objective spatial representation of the environment (see also de Vignemont 2014; Wong 2014; 2017).
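One way to see why a joint-angle code doesn’t translate directly into external spatial coordinates is to write the transformation down. The sketch below is standard planar forward kinematics for a two-segment leg; the sagittal-plane simplification, the angle conventions, and the segment lengths are assumptions for illustration only. The foot’s position depends jointly and nonlinearly on both angles and on the limb lengths, so a body model that gets segment lengths wrong (cf. Wong 2014; 2017) will misplace the foot even if it registers the joint angles correctly.

```python
import math

def foot_position(hip_deg, knee_deg, thigh=0.45, shank=0.45):
    """Planar two-link leg: map joint angles to ankle position (metres).

    Hypothetical conventions: hip at the origin, x forward, y up; hip angle measured
    from straight down (positive = thigh forward); knee angle = flexion relative to
    the thigh (positive = shank rotates backward).
    """
    h = math.radians(hip_deg)
    k = math.radians(knee_deg)
    # Knee position in hip-centred coordinates.
    knee_x = thigh * math.sin(h)
    knee_y = -thigh * math.cos(h)
    # Shank direction is the thigh direction rotated back by the knee flexion angle.
    foot_x = knee_x + shank * math.sin(h - k)
    foot_y = knee_y - shank * math.cos(h - k)
    return foot_x, foot_y
```

For instance, `foot_position(90, 90)` returns approximately `(0.45, -0.45)` (thigh horizontal, shin vertical), while the same joint angles computed with a shank overestimated by 10% place the foot about 4.5 cm lower.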

While some body models in the brain (including the one constructed between the primary motor cortex, brain area M1, and its targets in the cerebellum) are perhaps built exclusively from copies of motor commands, others (including the ones constructed across specialized parietal regions and their targets in the cerebellum) calibrate these motor-command-based predictions by integrating them with information about the body from other sensory sources, especially vision and vestibular feedback (Sarlegna & Sainburg 2009; Ishikawa et al. 2016; Naito et al. 2016; Tuthill and Azim 2018). These parietal regions also seem to do the work of transforming between the joint-relative encoding of proprioception and motor commands and the objective spatial coordinates of vision. Proprioceptive feedback is integrated even earlier (e.g., in the spinal cord) with feedback from haptic mechanoreceptors (touch receptors) and nociceptors (pain receptors). Not only are the neural representations of our body constructed by our brain multimodal, but our conscious bodily experience itself is, it seems, also constitutively multimodal (de Vignemont 2014). That is, our subjective bodily experience is based not just on proprioceptive information, but on an integration of information from proprioception, vision, and other sensory pathways.
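A common way to model this kind of multisensory integration, borrowed from the cue-combination literature rather than from the sources cited above, is to weight each sensory estimate by its reliability (inverse variance). The sketch assumes each estimate has independent Gaussian noise; the function name and the example numbers are hypothetical.

```python
def combine_cues(estimates):
    """Inverse-variance weighted fusion of independent Gaussian estimates.

    estimates: list of (mean, variance) pairs, e.g. hand position (cm) as
    signalled by proprioception and by vision. Returns (fused_mean, fused_variance).
    """
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    mean = sum(w * m for w, (m, _) in zip(weights, estimates)) / total
    return mean, 1.0 / total  # fused variance is smaller than any single cue's
```

With a noisy proprioceptive estimate `(10.0, 4.0)` and a sharper visual one `(12.0, 1.0)`, the fused estimate is about `(11.6, 0.8)`: pulled toward vision, and more reliable than either cue alone.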

Connecting neurobiology and phenomenology

The above facts about neurobiology provide nice explanations for the phenomenological observations made at the start. Why is it so hard to attend to bodily sensations? Because much of our proprioceptive feedback is kept in local circuits that never reach the brain, the brain works largely to suppress the rest, and the feedback that does reach the brain often arrives too late to be used for on-the-fly control.

Why are we able, nonetheless, to readily (and directly) experience our bodies’ position and movement? Because the brain is constantly modelling body position and movement from efference copies of motor commands, integrated with information from other sensory modalities.

Why is this experience of the body distorted? Because (at least some of) the body representations that ground this experience are constructed from motor commands and encoded in a coordinate system that doesn’t inherently place the body in a reference frame describing the external physical world. These representations also seem to distort the spatial dimensions of our limbs. Further, the proprioceptive signals that do make it through to the brain are mainly the unusually strong or unexpected ones.

Perhaps most important: why are we able to correct distorted experience of body position and motion by comparing proprioceptive sensations with more accurate sensory input? Because the brain’s body representation can be corrected by integrating multiple sources of feedback. For example, while pedalling I can associate the limited proprioceptive feedback I do receive with the accurately perceived circular motion of the pedals (and hence of my feet), thereby translating that proprioceptive feedback more accurately into the spatial coordinates of the external world.

Sonification

Let’s summarize: (1) If an athlete or performance artist relies only on conscious proprioceptive feedback (“how their body feels”), they will do poorly at tracking their body position and movement. (2) Bodily experience isn’t like the traditional exteroceptive modalities (e.g., vision). It isn’t based on a representation constructed from sensory (proprioceptive) input. Instead, it starts with motor output and modulates that with input from other sensory modalities (e.g., vision). (3) So you can’t rely on how your body “feels”, as there isn’t much to feel. (4) You can do a little better, as I did when tracking my pedalling, by learning to compare proprioceptive sensations with other sources of information.

But what if you could augment your proprioceptive feedback with additional information about your body position? What if you could recover the proprioceptive information that’s being suppressed, or receive position information that proprioception doesn’t encode at all? Vision allows you to do some of this, e.g. by watching yourself in a mirror, but that’s often impractical. What we want instead is sonification.

As Schaffert et al. (2019, 4) explain, “Sonification, as the transfer of movement data [collected via artificial body sensors] into non-speech audio signals, refers to the mapping of physiological and physical data onto psychoacoustic parameters (i.e., loudness, pitch, timbre, harmony and rhythm) in order to provide on- and/or offline access to biomechanics information otherwise not available (…).” So, in movement sonification, some physical parameter (e.g., hand position, limb velocity, muscle force) is systematically mapped into some acoustic parameter (e.g., loudness, pitch) that’s played for the subject. For example, a constant tone is played for a subject, and that tone’s volume varies with the height of their hand: louder as the hand is raised, softer as it’s lowered.
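The hand-height example can be sketched as a simple parameter-mapping sonification. Everything here is an illustrative assumption: the 0-2 m height range, the 100 Hz sensor rate, the 8 kHz audio rate, the 440 Hz carrier, and the function names. The code holds the pitch constant and scales the sine wave’s amplitude with the most recent height sample.

```python
import math

def height_to_amplitude(height_m, low_m=0.0, high_m=2.0):
    """Map hand height (m) linearly onto amplitude in [0, 1], clamping out-of-range values."""
    x = (height_m - low_m) / (high_m - low_m)
    return min(1.0, max(0.0, x))

def sonify_heights(heights_m, control_rate=100, sample_rate=8000, freq_hz=440.0):
    """Render a constant-pitch sine tone whose loudness follows hand height.

    heights_m: hand-height samples from a (hypothetical) body sensor at control_rate Hz.
    Returns audio samples in [-1, 1] at sample_rate Hz. Amplitude changes in steps
    once per control frame; a practical system would smooth them to avoid clicks.
    """
    per_frame = sample_rate // control_rate
    samples = []
    n = 0  # running sample index keeps the sine phase continuous across frames
    for h in heights_m:
        amp = height_to_amplitude(h)
        for _ in range(per_frame):
            samples.append(amp * math.sin(2 * math.pi * freq_hz * n / sample_rate))
            n += 1
    return samples
```

Raising the hand from 0.5 m to 1.5 m triples the tone’s amplitude (0.25 to 0.75). The samples could be scaled to 16-bit integers and written to a file with the standard-library `wave` module for listening.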

Although work on sonification is still in many ways just beginning (especially in applied sports contexts), existing studies do suggest that it holds promise as a method for improving bodily awareness. As Schaffert et al. (2019, 4) summarize: “Investigations examining the use of sonification in elite or high-performance sports have demonstrated that the presentation of artificially generated sounds optimize movement control and execution (e.g., stability, velocity, pattern and force symmetry) in sports such as swimming (Chollet et al., 1988, 1992), rowing (Schaffert et al., 2010, 2011; Schaffert and Mattes, 2011, 2015b, 2016), and cycling (Sigrist et al., 2016; Schaffert et al., 2017).” For example, Effenberg et al. (2016) provide a rigorous study of rowing technique showing that novices training with sonification improve their technique faster and more than controls without sonification, and also retain that technique afterwards.

Given the integrative, multimodal character of proprioceptive processing, it should be no surprise that augmenting proprioceptive feedback via sonification could improve sport movement learning (Effenberg et al. 2016). There’s already evidence that natural movement sounds play a role in sport movement control (e.g., Kennel et al. 2015). So clearly auditory input can be, and is, used by the brain for motor control.

There are two broad methods for sonification: (1) directly convert some movement parameter (e.g., position, velocity, force) into a covarying audio signal (e.g., Schaffert et al. 2017); (2) convert the deviation of that movement parameter from the ideal into a covarying audio signal (e.g., Godbout and Boyd 2010). While (1) gives the athlete relevant information they wouldn’t have otherwise, (2) allows them to train the movement by attempting to minimize their auditory feedback (signalling that they’ve minimized the deviation of their movement from the ideal).
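The difference between the two methods is easiest to see side by side. In this sketch the function names, ranges, tolerance, and example values are all hypothetical: method (1) maps the parameter itself onto pitch, while method (2) maps only the deviation from an ideal value onto loudness, so the athlete trains by driving the sound toward silence.

```python
def direct_mapping(value, lo, hi, f_min_hz=200.0, f_max_hz=800.0):
    """Method (1): the movement parameter itself sets the pitch.

    value is clamped to [lo, hi] and mapped linearly onto [f_min_hz, f_max_hz].
    """
    x = min(1.0, max(0.0, (value - lo) / (hi - lo)))
    return f_min_hz + x * (f_max_hz - f_min_hz)

def deviation_mapping(value, ideal, tolerance, max_dev):
    """Method (2): loudness (0..1) grows with deviation from an ideal value.

    Deviations within tolerance are silent, so the training goal is silence;
    deviations at or beyond max_dev play at full volume.
    """
    err = abs(value - ideal)
    if err <= tolerance:
        return 0.0
    return min(1.0, (err - tolerance) / (max_dev - tolerance))

# Hypothetical examples: hand height in metres, knee angle in degrees.
print(direct_mapping(1.0, lo=0.0, hi=2.0))                                 # 500.0 Hz
print(deviation_mapping(100.0, ideal=90.0, tolerance=2.0, max_dev=20.0))   # about 0.44
```

The tolerance band in method (2) is a design choice: without it, even tiny, harmless deviations would keep the tone audible and the athlete could never reach silence.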

An example and a proposal

In a small pilot study, Schaffert et al. (2017) mapped the real-time torque measurements from a Wattbike onto a pitch-varying tone, so that higher torque corresponded to higher pitch. They showed not only that subjects could intuitively distinguish effective from ineffective pedal strokes based on the audio feedback, but also that subjects (n=4) given the audio feedback reported finding it helpful for improving pedalling effectiveness and left/right pedal balance. Subjects also reported that it revealed to them aspects of their pedalling of which they weren’t aware before. In theory, subjects could achieve better pedal effectiveness and left/right balance by modulating their effort so as to produce a constant tone (no variation in pitch); rhythmic variations in pitch indicate “dead spots”, negative force on the upstroke, and/or left/right imbalance.
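The torque-to-pitch idea can be sketched in a few lines. This is not Schaffert et al.’s implementation: the linear mapping and its constants, the 10-degree sampling, and all three torque profiles are invented for illustration. It does follow the logic described above: an even stroke yields a nearly constant tone, dead spots show up as pitch ripple within each revolution, and a left/right imbalance shows up as unequal halves.

```python
import math

def torque_to_pitch(torque_nm, base_hz=440.0, hz_per_nm=4.0, floor_hz=50.0):
    """Hypothetical linear map: more torque -> higher pitch; negative torque -> below base_hz."""
    return max(floor_hz, base_hz + hz_per_nm * torque_nm)

def pitch_ripple(torques):
    """Peak-to-peak pitch variation (Hz) over one revolution; 0 means a perfectly even tone."""
    pitches = [torque_to_pitch(t) for t in torques]
    return max(pitches) - min(pitches)

def right_share(torques):
    """Fraction of positive torque produced in the first half of the revolution.

    Assumes sampling starts at the top of the right pedal's downstroke, so the first
    half is (roughly) driven by the right leg and the second half by the left.
    """
    half = len(torques) // 2
    right = sum(max(0.0, t) for t in torques[:half])
    left = sum(max(0.0, t) for t in torques[half:])
    return right / (right + left)

# Invented crank-torque profiles (N·m), sampled every 10 degrees of crank angle.
angles = [math.radians(a) for a in range(0, 360, 10)]
choppy = [40 * abs(math.sin(a)) for a in angles]        # dead spots at 0 and 180 degrees
smooth = [30 + 10 * abs(math.sin(a)) for a in angles]   # force carried through the dead spots
lopsided = [(40 if a < math.pi else 30) * abs(math.sin(a)) for a in angles]  # weaker left leg
```

Here the choppy stroke produces a 160 Hz pitch swing per revolution versus 40 Hz for the smooth one, and the lopsided stroke gives a right-leg share of about 0.57 instead of 0.5.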

The polar display on the Wattbike provides the same functionality (visualization of torque, as opposed to sonification), but has several drawbacks compared with sonification (Schaffert et al. 2017, 42). (1) The polar display only updates once per second, not in real time. Further, visual processing is not as temporally precise as auditory processing, and so real-time visual feedback of torque is likely not as helpful (due to visual processing limitations) for updating body models and improving pedalling effectiveness. (2) Visual processing of information on the polar display is very demanding of attention and leaves few attentional resources available for other tasks while on the bike, while auditory processing of the sonification is less demanding. (3) Relatedly, there would be no way to implement the polar display on a bicycle (ridden on the road or track), as riding a free bicycle precludes constant monitoring of a visual display (but doesn’t preclude listening to a tone). Similar constraints apply for most sport and performance art applications: since visual attention must be focused on the relevant task (e.g., piloting a bike), visualizations of proprioceptive information cannot be used (but sonifications can be).

The Schaffert et al. (2017) study provides one simple example of sonification, but there is significantly more potential. Consider, for example, the standing start in cycling:

A “standing start” is when a rider starts a bike (for a race, time trial, or practice effort) from a dead stop, with the bike being held at the start either by a human or mechanical holder. The goal, of course, is to get the bike up to speed as quickly as possible. Effective standing starts are a complex movement requiring coordination between the legs, hips, shoulders, and arms. As can be seen in the above photos (or this video), the rider launches by pushing their hips back high and behind the saddle, then throwing the hips forward towards the handlebars in a way that leverages their body weight up and in front of the pedals.

Athletes can be shown demonstrations of proper technique and given crude verbal instructions (e.g., “imagine you’re thrusting your hips forward like doing a deadlift”), but translating these demonstrations and instructions into proper motor commands is very difficult. Mastering the technique requires many hours of practice. As we’ve been discussing, the problem is impoverished bodily awareness: during the standing start athletes receive little conscious proprioceptive feedback about their position, this feedback is delayed, and it’s delivered to them in a spatial format that is difficult to translate into the spatial representations afforded by seeing demonstrations of the movement.

A proposal is to use high-fidelity inertial sensors (e.g., Lapinski et al. 2019) to capture the motion of an experienced rider performing a standing start. The same sensors could then be used to capture the motion of an inexperienced rider. The differences between the two motion captures could then be sonified into a tone, so that tone volume or pitch correlates systematically with the difference between them. With such a setup a rider could train their standing start by attempting to minimize tone volume or pitch on each practice effort. Assuming feedback latency on the order of milliseconds, such a setup could even allow for position adjustments during each effort: e.g., loud or high tones would prompt position changes over the course of the effort to minimize those tones.
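The core of this proposal, comparing a novice’s motion capture with an expert’s and turning the difference into a tone, might look like the following offline sketch. Everything here is an assumption: frames are lists of sensor channels already expressed in comparable units, the two efforts are aligned simply by stretching both to the same number of frames (a real system might need dynamic time warping or phase tracking, especially for real-time use), and the volume and pitch ranges are arbitrary.

```python
import math

def resample(frames, n):
    """Linearly resample a list of frames (each a list of channel values) to n frames.

    Both efforts are treated as running from 0 to 1 in normalised time; requires
    at least 2 input frames and n >= 2.
    """
    m = len(frames)
    out = []
    for i in range(n):
        t = i * (m - 1) / (n - 1)
        j = min(int(t), m - 2)
        frac = t - j
        out.append([a + frac * (b - a) for a, b in zip(frames[j], frames[j + 1])])
    return out

def deviation_tones(reference, attempt, n=100, max_dev=1.0, low_hz=220.0, high_hz=880.0):
    """Per-frame (volume, pitch) cues from the distance between two motion captures.

    reference: expert frames; attempt: novice frames. Zero deviation gives silence
    at low_hz; a deviation of max_dev or more gives full volume at high_hz.
    """
    tones = []
    for r, a in zip(resample(reference, n), resample(attempt, n)):
        x = min(1.0, math.dist(r, a) / max_dev)  # Euclidean distance (Python 3.8+)
        tones.append((x, low_hz + x * (high_hz - low_hz)))
    return tones
```

An attempt identical to the reference stays silent throughout; one offset by 0.5 units in a single channel plays at half volume and 550 Hz for the whole effort, signalling a constant error rather than one confined to a particular phase of the start.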

Explaining the benefits of sonification

It’s well established that at the neurological level there is integration of information from auditory input into the brain’s body model (Effenberg et al. 2016). For example, there are projections from auditory processing sites in the brainstem (cochlear nucleus) down into sensorimotor circuits in the spinal cord, and projections from auditory processing sites in the cortex to the primary and premotor cortex (Schaffert et al. 2019, 8-9). It’s known that activity in these auditory areas can entrain the receiving motor areas, presumably facilitating rhythmic movements; it’s also known that natural movement sounds lead to associated activity in the related motor areas (ibid). More speculatively, at the functional level it seems that the kind of information specifically provided by sonification can be integrated into motor representations (or perhaps body models), thereby filling in gaps left by sparse proprioceptive feedback (ibid, 9-10).

At the phenomenological level, the benefits of sonification should also be clear. Conscious proprioceptive feedback (bodily sensation) is both sparse and transparent. When an athlete is asked to “focus on the position they feel like they’re in”, there simply aren’t many bodily sensations for them to attend to. Sonification provides a way to convert relevant bodily information into a consciously accessible stream of sensory input.

A compelling and well-supported idea is that we perceive distal objects in the environment through the way the proximal sensory stimulation from them covaries with movement (Noe 2004). For example, objects in the environment project 2D patches of light onto our retina, but we nonetheless manage to see (visually perceive) the 3D objects themselves through the way those 2D patches of light change depending on the angle and distance of viewing.

This doesn’t seem to apply to perceiving our own body. Because proprioceptive input is suppressed, our “perception” of our own body normally depends on the body representation the brain constructs from efference copies of motor commands. But sonification affords a new stream of sensory information through which we can come to perceive our bodies. By noticing how the acoustic input from sonification changes depending on our motion, we can come to perceive our body’s position and the effects of our movements on that position. Compare: if I feel pain sensations in my knee, I can come to perceive the damage and swelling causing those sensations by moving my knee and exploring how my pain covaries with movement; I come not only to “feel pain” diffusely, but to perceive my torn or swollen ligaments. The way I improved the accuracy of my bodily experience while pedalling, mentioned above, is an example of this. I was able to accurately perceive the shape of my leg movement by exploring how proprioceptive bodily sensations changed depending on how I altered my movement, and comparing those sensations to my accurate perception of the pedals’ movement. Sonification of body movement would afford a richer set of sensory input from which to do this sort of sensorimotor exploration.

Practical tips

Poor bodily awareness in athletes and performance artists derives from (a) the suppression of proprioceptive feedback and its confinement to peripheral neural circuits, (b) what seems to be a resulting lack of usable conscious bodily sensations, and (c) attention to a (distorted) body model that’s based on motor commands and not encoded in the objective spatial representations of vision.

Athletes and performance artists can improve their bodily awareness by (a) learning to draw from, and integrate, multiple sources of information about their body position and movement (e.g., visual, auditory, haptic), and (b) attending to external objects whose location carries information about the body (e.g., a barbell or bike pedals), since these are more accurately represented in the brain in objective spatial coordinates. (c) They should also pay attention to how sensations and other feedback change with body movement, as accurate conscious perception of physical objects (including the body) depends on those objects being revealed through motion-dependent changes in sensory input.

The supplementation of our sparse proprioceptive feedback through sonification can further improve an athlete’s bodily awareness by affording them valuable sensory information about body position which is either not registered at all by our proprioceptors, is lost to local circuits, or is suppressed.

References

- Bermudez, J.L. (2005) “The phenomenology of bodily awareness” In: Woodruff Smith, D. and Thomasson, A.L. (eds) Phenomenology and Philosophy of Mind. Clarendon Press, Oxford. pp. 295-316.

- Chollet, D., Madani, M., and Micallef, J.P. (1992) “Effects of two types of biomechanics bio-feedback on crawl performance” In: MacLaren, D., Reilly, T., and Lees, A. (eds) Biomechanics and Medicine in Swimming. E & FN Spon, London. pp. 57-62.

- Chollet, D., Micallef, J.P. and Rabischong, P. (1988) “Biomechanical signals for external biofeedback to improve swimming techniques” In: Ungerechts, B., Wilke, K., and Reischle, K. (eds) Swimming Science V. International Series of Sport Sciences, Vol. 18. Human Kinetics Books, Champaign, IL. pp. 389-396.

- Effenberg, A.O., Fehse, U., Schmitz, G., Krueger, B., and Mechling, H. (2016) “Movement sonification: Effects on motor learning beyond rhythmic adjustments” Frontiers in Neuroscience, 10, 1-18.

- Godbout, A. and Boyd, J.E. (2010) “Corrective sonic feedback for speed skating: a case study” in Proceedings of the 16th International Conference on Auditory Display, Washington, DC, 23-30.

- Ishikawa, T., Tomatsu, S., Izawa, J. and Kakei, S. (2016) “The cerebro-cerebellum: Could it be loci of forward models?” Neuroscience Research, 104, 72-79.

- Kennel, C., Streese, L., Pizzera, A., Justen, C., Hohmann, T., and Raab, M. (2015) “Auditory reafferences: The influence of real-time feedback on movement control” Front. Psychol., 6(69), 1-6.

- Lapinski, M., Brum Medeiros, C., Moxley Scarborough, D., Berkson, E., Gill, T.J., Kepple, T., and Paradiso, J.A. (2019) “A wide-range, wireless wearable inertial motion sensing system for capturing fast athletic biomechanics in overhead pitching” Sensors, 19(3637), 1-15.

- Matthen, M. (Forthcoming) “Dual structure of touch: The body vs. peripersonal space” In: de Vignemont, F. (ed.) The World at Our Fingertips. Oxford: Oxford University Press.

- Miall, R.C. and Wolpert, D.M. (1996) “Forward Models for Physiological Motor Control” Neural Networks, 9(8), 1265-1279.

- Moore, G.E. (1903) “The refutation of idealism” Mind, 12, 433-453.

- Nagel, T. (1974) “What is it like to be a bat?” Philosophical Review, 83, 435-450.

- Naito, E., Morita, T. and Amemiya, K. (2016) “Body representations in the human brain revealed by kinaesthetic illusions and their essential contributions to motor control and corporeal awareness” Neuroscience Research, 104, 16-30.

- Noe, A. (2004) Action in Perception, The MIT Press, Cambridge, MA.

- Sarlegna, F.R. and Sainburg, R.L. (2009) “The Roles of Vision and Proprioception in the Planning of Reaching Movements” In: Sternad, D. (ed.) Progress in Motor Control. Advances in Experimental Medicine and Biology, vol 629. Springer, Boston, MA. pp. 317-336.

- Schaffert, N., Braun Janzen, T., Mattes, K., and Thaut, M.H. (2019) “A review on the relationship between sound and movement in sports and rehabilitation” Frontiers in Psychology, 10, 1-20.

- Schaffert, N., Godbout, A., Schlueter, S., and Mattes, K. (2017) “Towards an application of interactive sonification for the forces applied on the pedals during cycling on the Wattbike ergometer” Displays, 50, 41-48.

- Schaffert, N. and Mattes, K. (2011) “Designing an acoustic feedback system for on-water row training” Int. J. Comput. Sci. Sport, 10, 71-76.

- Schaffert, N. and Mattes, K. (2015b) “Interactive sonification in rowing: an application of acoustic feedback for on-water training” IEEE Multimed., 22, 58-67.

- Schaffert, N. and Mattes, K. (2016) “Influence of acoustic feedback on boat speed and crew synchronization in elite junior rowing” Int. J. Sports Coach. 11, 832-845.

- Schaffert, N., Mattes, K., and Effenberg, A.O. (2010) “Listen to the boat motion: acoustic information for elite rowers” in Proceedings of the ISon 2010, 3rd Interactive Sonification Workshop, Stockholm, 31-38.

- Schaffert, N., Mattes, K., and Effenberg, A.O. (2011) “An investigation of online acoustic information for elite rowers in on-water training conditions” J. Hum. Sport Exerc., 6, 392-405.

- Sigrist, R., Fox, S., Riener, R. and Wolf, P. (2016) “Benefits of crank movement sonification in cycling” Procedia Eng., 147, 513-518.

- Toner, J., Montero, B.G. and Moran, A. (2016) “Reflective and prereflective bodily awareness in skilled action” Psychology of Consciousness: Theory, Research, and Practice, 3(4), 303-315.

- Tuthill, J.C. and Azim, E. (2018) “Proprioception” Current Biology, 28, R194-R203.

- de Vignemont, F. (2014) “A multimodal conception of bodily awareness” Mind, 123(492), 989-1020.

- de Vignemont, F. (2018) “Bodily awareness”, The Stanford Encyclopedia of Philosophy, Spring 2018 edition.

- Wong, H.Y. (2014) “On the multimodality of body perception in action” Journal of Consciousness Studies, 21(11-12), 130-139.

- Wong, H.Y. (2017) “On proprioception in action: Multimodality versus deafferentation” Mind and Language, 32(3), 259-282.