Michael Barkasi

About me

I’m a staff scientist for Oviedo Lab, an electrophysiology lab in the department of neuroscience at Washington University in St. Louis (WashU). I’m also an associate member of the Centre for Philosophy of Memory, researching the role of memory in perception and the phenomenology of episodic memory. Previously I was a lecturer in the department of philosophy and the philosophy-neuroscience-psychology program at WashU, a postdoctoral visitor with Harris Lab at York University studying the integration of auditory and proprioceptive feedback in the control of movement, and a postdoctoral research fellow at the Network for Sensory Research in the Department of Philosophy, University of Toronto. I completed my Ph.D. in philosophy at Rice University (Houston, Texas), where I was also a coauthor of the program’s textbook in mathematical logic.

Computational Neuroscience

My projects

- Neuromorphic circuits for speech recognition inspired by models of auditory edge detection.

- Molecular mechanisms behind auditory cortex lateralization, especially developing models using spatial transcriptomics data.

- Lateralized recurrent pathways in auditory cortex.

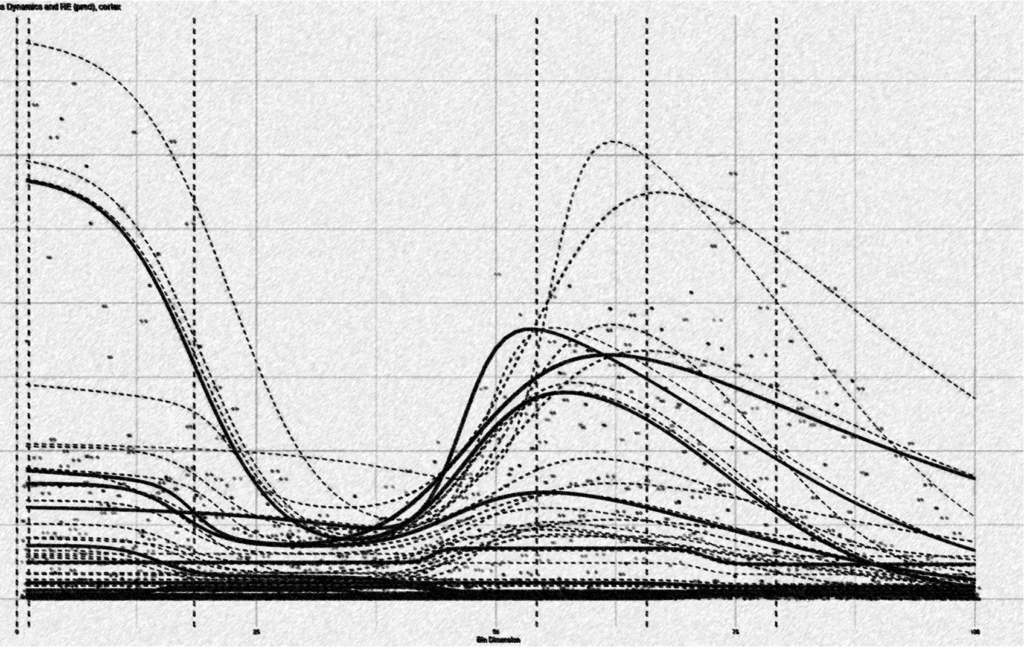

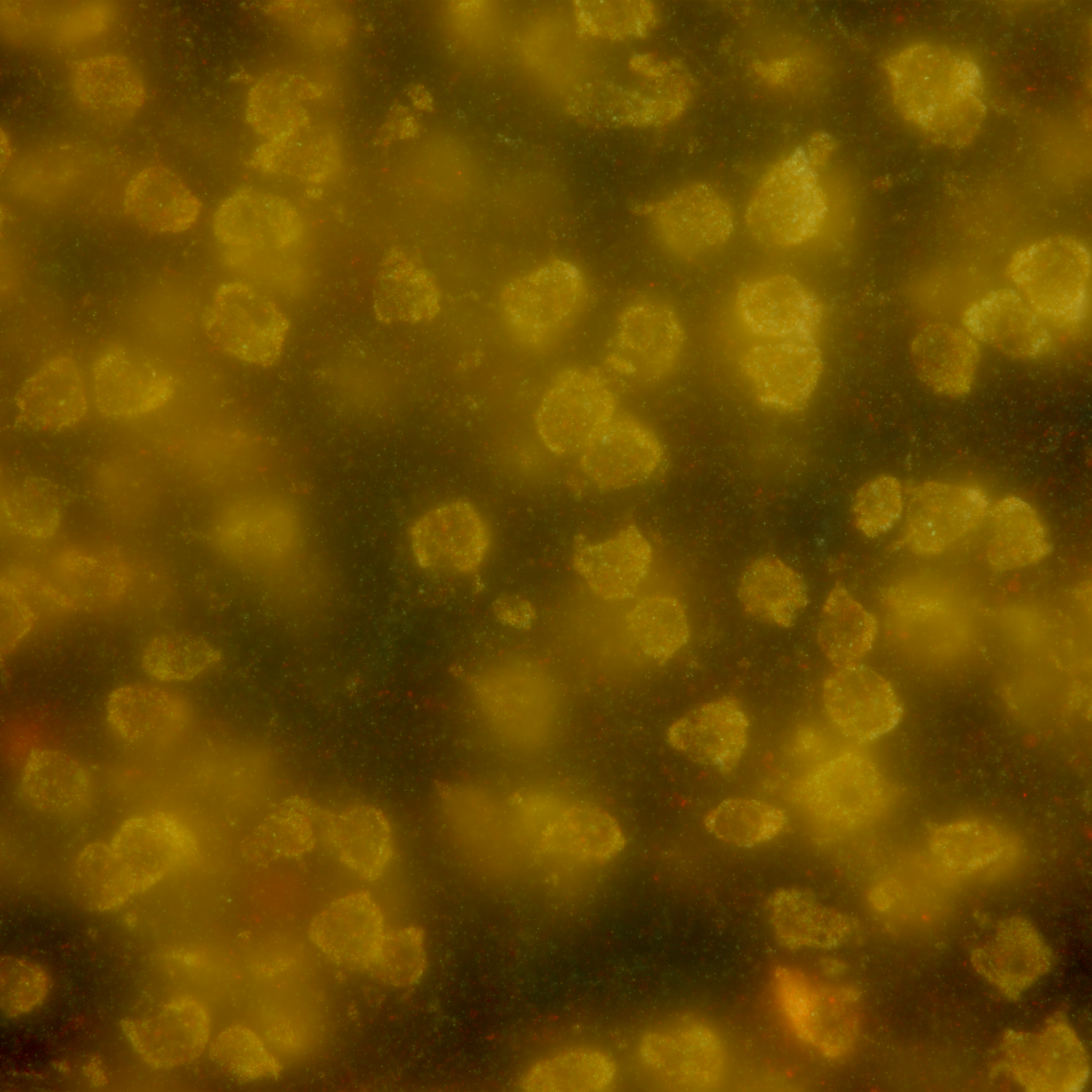

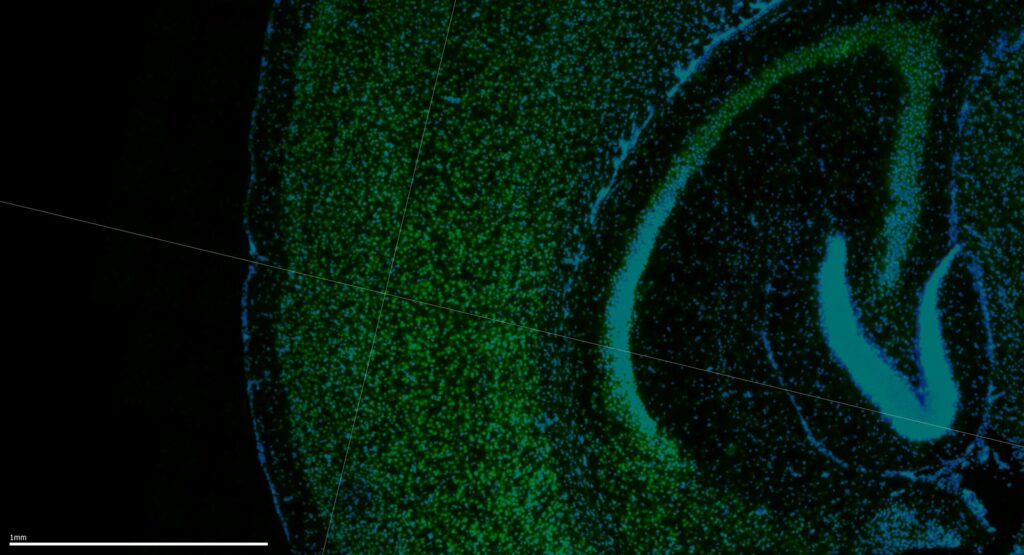

MERFISH accuracy estimation

Improved quality control for MERFISH data

MERFISH technologies like MERSCOPE label luminance spots by decoding barcodes. It’s been known since the original work by the Zhuang Lab [10.1126/science.aaa6090] that more than half of labels can be incorrect, so it’s important to be able to estimate which RNA species in a run are reliable. To meet this need, I’ve developed a new statistical technique for estimating the ratio of mislabeled spots in barcode-based spatial transcriptomics data. Happy to chat with anyone interested in learning more. [WashU Innovation Catalog]

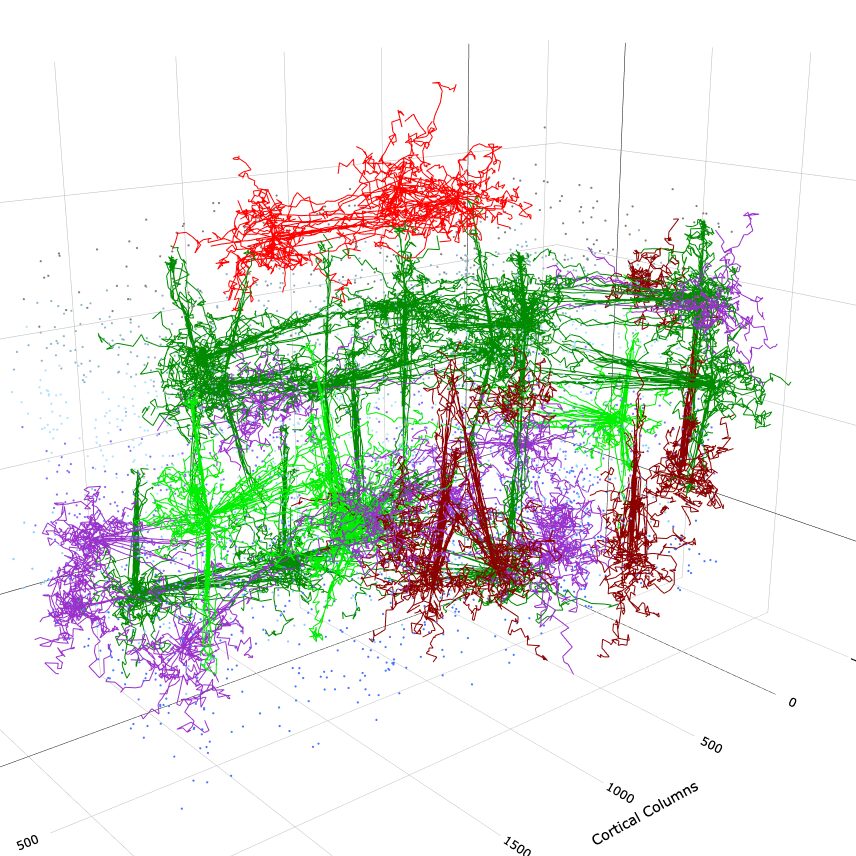

DACx

Digital Auditory Cortex

R/C++ package for running biologically realistic simulations of the mammalian auditory cortex, i.e., a “digital twin”. Network topologies are built from circuit motifs and spiking is simulated via growth-transform. [GitHub] [Documentation]

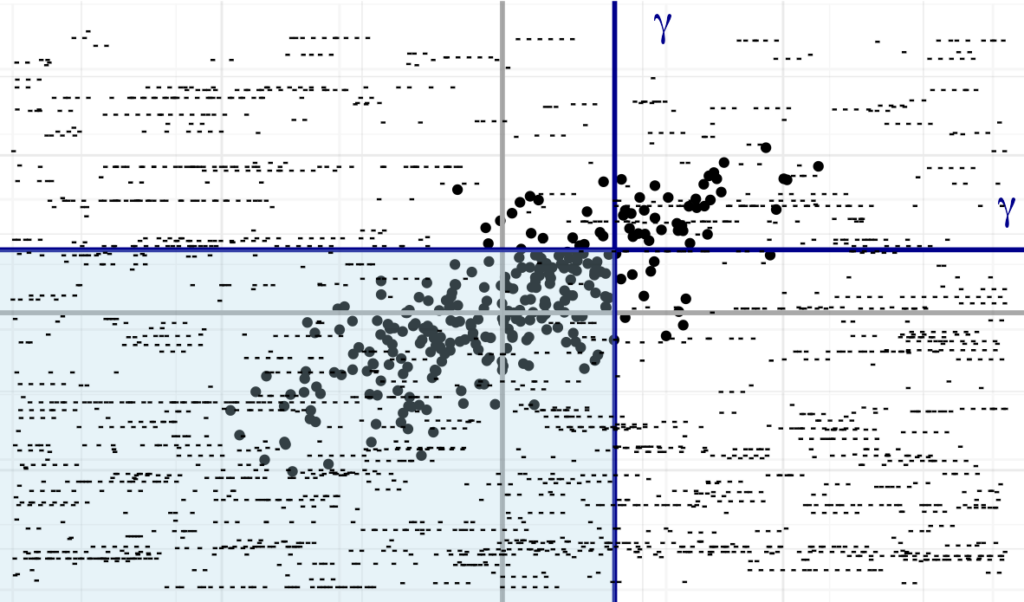

wispack

Modeling gene transcription in space

Implements warped sigmoidal Poisson-process mixed-effects models, a tool for testing for between-group effects on the spatial distribution of genes. [GitHub] [documentation] [paper] [preprint]

neuronsDG package

R/C++ package for modeling single-neuron spiking with autocorrelation and cross-correlation, using dichotomized Gaussians. [GitHub] [documentation]
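
For readers new to the idea, here is a minimal Python sketch of a dichotomized Gaussian spike train. It is not the neuronsDG API: the function names, the AR(1) latent process, and the parameter values are all assumptions chosen for illustration. A latent Gaussian signal is thresholded so that each time bin holds a spike with the desired probability, and correlation in the latent signal carries over (attenuated) to the spikes.

```python
import random
from statistics import NormalDist


def dg_spike_train(n_bins, rate, latent_rho, seed=0):
    """Binary spike train from a thresholded (dichotomized) latent Gaussian.

    Hypothetical helper for illustration only.
    rate: target spike probability per bin (0 < rate < 1).
    latent_rho: lag-1 correlation of the latent AR(1) Gaussian (assumed form).
    """
    rng = random.Random(seed)
    # Threshold chosen so that P(z > threshold) == rate for z ~ N(0, 1).
    threshold = NormalDist().inv_cdf(1.0 - rate)
    # Innovation scale that keeps the latent process at unit variance.
    innovation_sd = (1.0 - latent_rho ** 2) ** 0.5
    z = rng.gauss(0.0, 1.0)
    spikes = []
    for _ in range(n_bins):
        spikes.append(1 if z > threshold else 0)
        z = latent_rho * z + innovation_sd * rng.gauss(0.0, 1.0)
    return spikes


def lag1_autocorr(x):
    """Sample lag-1 autocorrelation of a sequence."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x)
    cov = sum((x[i] - mean) * (x[i + 1] - mean) for i in range(len(x) - 1))
    return cov / var


spikes = dg_spike_train(n_bins=20000, rate=0.2, latent_rho=0.8)
print("mean rate:", sum(spikes) / len(spikes))
print("lag-1 autocorrelation:", lag1_autocorr(spikes))
```

The spike-train autocorrelation comes out lower than the latent correlation, which is why fitting such a model means solving for the latent correlation that yields the observed spike correlation.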

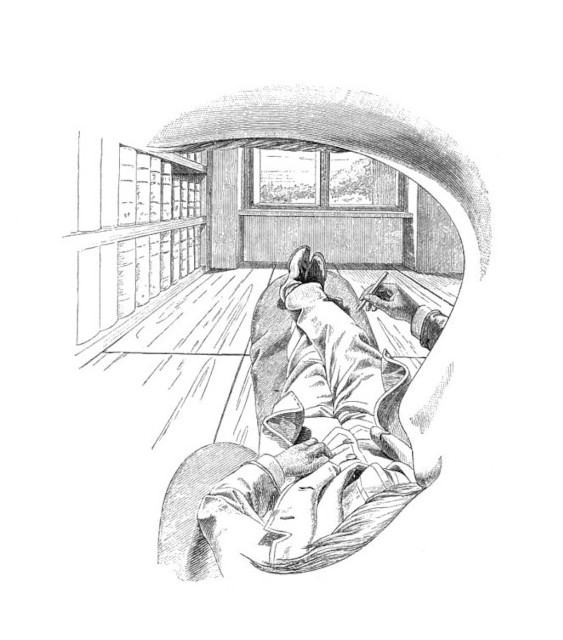

Phenomenology

Philosophical work

I’m interested in how memory and sensory perception interact to afford consciousness of the past and present. My work focuses on experiencing what’s not there (memories, dreams, hallucinations), the feelings of presence and pastness, and the neural correlates of consciousness. Check out this piece and this piece on presence and digital fluency, or this paper on how perception involves experience of the past. I summarize the idea in a blog post. (Image: Self-portrait, Ernst Mach, 1886)

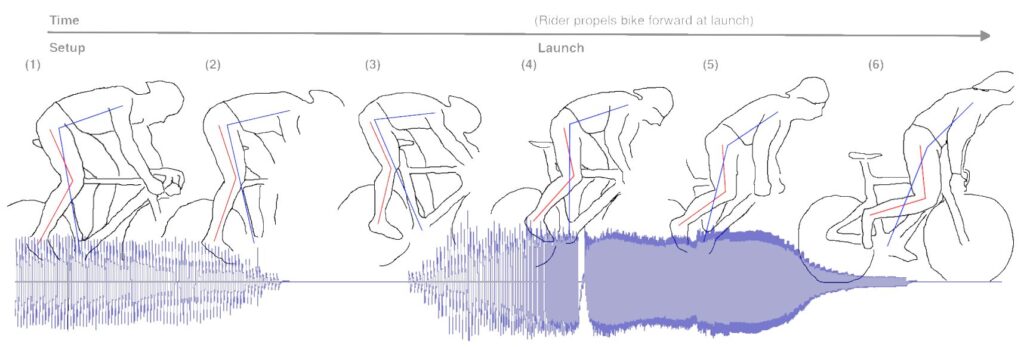

Movement Sonification

Improving bodily awareness with sound

Before my transition to neuroscience I did behavioral psychology experiments on how auditory feedback can augment, replace, and enrich natural proprioception, improving motor learning and motor control in fast, skilled movements. This work involved developing ultrafast wearable embedded sensor systems for movement sonification. I worked with the Cortex M4 and ESP32, developed bare-metal digital sound synthesis techniques (two-timer pulse-width modulation, based on a class-D amplifier), used inertial sensors, designed bespoke PCB feathers (KiCad), and 3D-printed enclosures (FreeCAD).

As part of this sonification work I wrote motion processing algorithms for both real-time processing in embedded hardware (C++) and for post-processing (R). This work involved motion detection and segmentation, coordinate transformations, input integration, path comparisons (error estimation), and time-warping (both post-processing dynamic time-warping and real-time online warping estimates).
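
As a rough illustration of the post-processing time-warping step, the sketch below computes the textbook dynamic time-warping (DTW) distance between two one-dimensional traces in Python. It is a generic version, not the code used in the sonification studies: the function name and the absolute-difference cost are assumptions, and real kinematic data would normally be multidimensional.

```python
def dtw_distance(a, b):
    """Classic O(len(a) * len(b)) dynamic time-warping distance.

    Generic textbook version for illustration; uses absolute difference
    as the local cost between samples.
    """
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = minimal accumulated cost aligning a[:i] with b[:j].
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            cost[i][j] = local + min(
                cost[i - 1][j],      # a advances, b repeats a sample
                cost[i][j - 1],      # b advances, a repeats a sample
                cost[i - 1][j - 1],  # both advance together
            )
    return cost[n][m]


# The same path traced at different speeds aligns with zero cost.
print(dtw_distance([0, 1, 2, 3], [0, 1, 1, 2, 3]))
```

Because the warping absorbs differences in timing, the remaining distance reflects differences in the shape of the path, which is what makes it useful for comparing reaches performed at different speeds.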

Coding Portfolio

Modeling Software

- 2026. DACx: Digital auditory cortex, R/C++ package for running biologically realistic simulations of the mammalian auditory cortex, i.e., a “digital twin”. Network topologies are built from circuit motifs and spiking is simulated via growth-transform. [Documentation] (R package, Rcpp, C++)

- 2025. neuronsDG, R/C++ package for estimating auto- and cross-correlation in the spiking of single neurons with dichotomized Gaussians. [documentation] (R package, Rcpp, C++)

- 2025. wispack, warped sigmoidal Poisson-process mixed-effects models for testing for functional spatial effects in spatial transcriptomics data. [documentation] [paper] [preprint] (R package, Rcpp, C++)

- 2024. Hidden-state Bayesian learning, simulated Bayesian reasoner who learns a reach target based on auditory feedback, intended for modeling sonification data. (R scripts, C++)

- 2023. Generalized linear modeling (GLM), demo of task-dependent somatomotor cortex responses from simulated fMRI data. (Python, CoLab)

- 2023. Heuristics-based physical symbol system simulation, instructional demo, algorithm solves a version of the river-crossing problem. (Python, CoLab)

- 2022. Two-pivot reach model for tracking position through Cartesian space from raw gyroscope readings. (C++, Arduino)

Statistics and Data Analysis

- 2025. Bayesian MCMC parameter estimation, instructional demo on how to use Markov-chain Monte Carlo (MCMC) simulations to estimate parameters with Bayes’ rule. (R script)

- 2024. Kinematic data analysis, full pipeline covering data synchronization, signal filtering, linear mixed-effects modeling, and nonparametric bootstrapping, used for post-processing and statistical significance testing of kinematic and accuracy data (optical and inertial) from a motor control study involving reaches with movement sonification. (R scripts)

- 2023. Sentiment analysis with deep neural network, instructional demo performing sentiment analysis of IMDb movie reviews with explanation of network opacity. (Python, CoLab, PyTorch)

- 2023. Linear decoding (logistic regression) of motor tasks from simulated somatomotor cortex fMRI data. (Python, CoLab)

- 2023. Single-layer neural network (McCulloch-Pitts Neuron), instructional demo on learning with the Perceptron Convergence Rule. (Python, CoLab)

Hardware and Embedded Systems

- 2024. Movement sonification hardware code for a real-time embedded (wearable) sensor system that tracks motion via inertial sensors at 1 kHz while providing auditory feedback with only 1–2 ms latency. (C++, Arduino, ESP32)

- 2021. Two-timer pulse-width modulation (class-D amplifier) for low-overhead bare-metal digital sound synthesis on single-core embedded processors. (C++, Arduino, Cortex M4F, ESP32)

Interested in chatting about human perception or movement sonification?